SparseIMU: Computational Design of Sparse IMU Layouts for Sensing Fine-Grained Finger Microgestures

Digital Fabrication Technologies, Aditya, Adwait

(TOCHI 2022)

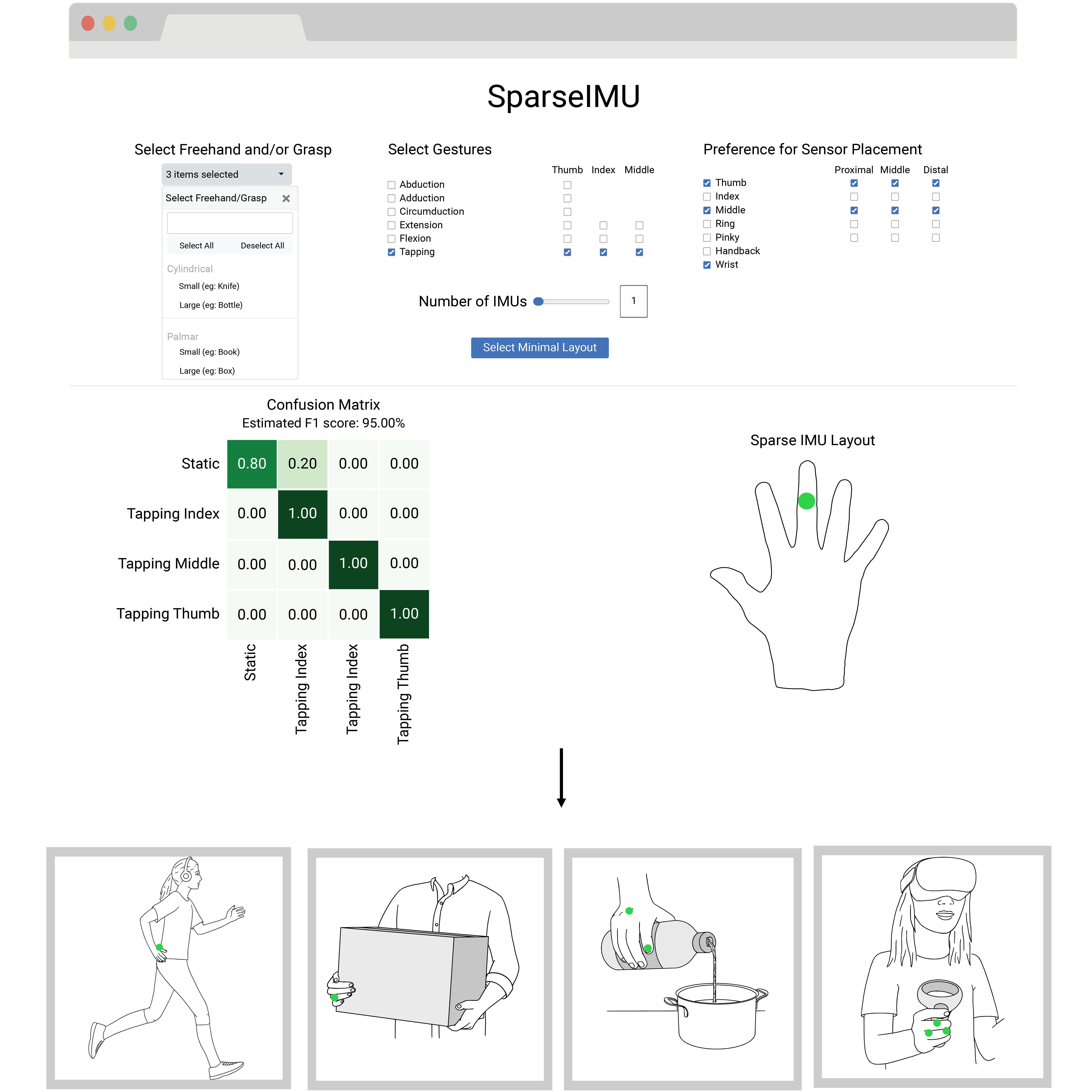

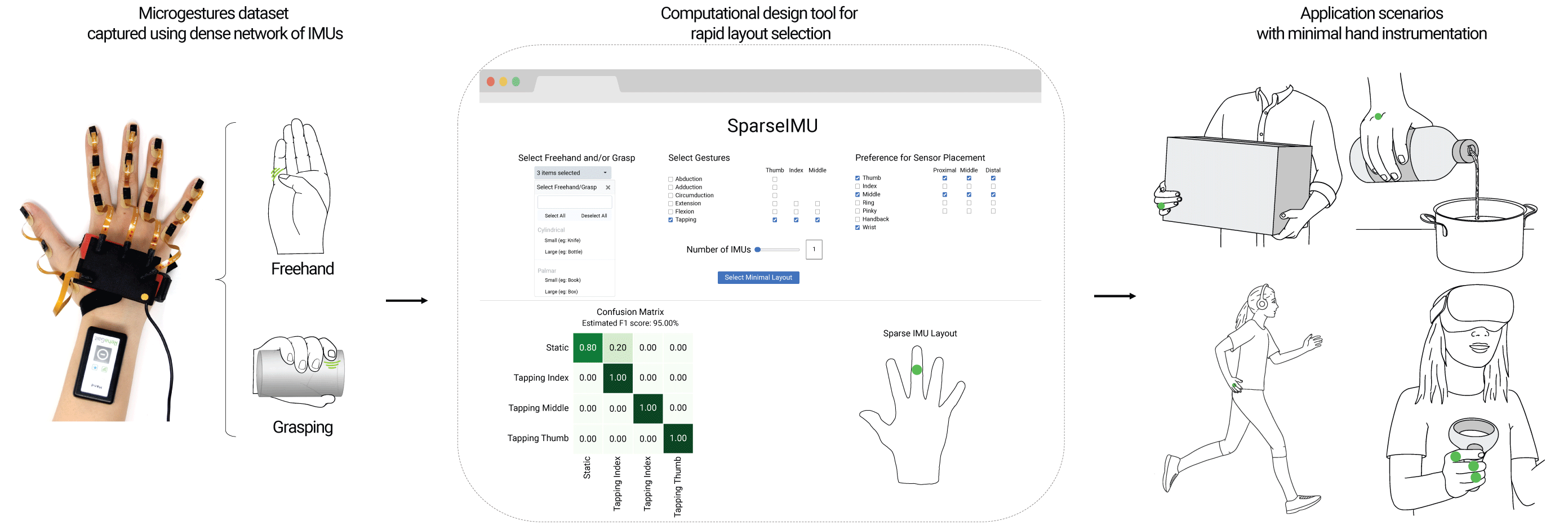

Gestural interaction with free hands and while grasping an everyday object enables always-available input. To sense such gestures, minimal instrumentation of the user’s hand is desirable. However, choosing an effective but minimal IMU layout remains challenging because of the complexity of the multi-factorial space, which comprises diverse finger gestures, objects and grasps. We present SparseIMU, a rapid method for selecting minimal inertial sensor-based layouts for effective gesture recognition. Furthermore, we contribute a computational tool that guides designers toward optimal sensor placement. Our approach builds on an extensive microgestures dataset that we collected with a dense network of 17 inertial measurement units (IMUs). We performed a series of analyses, including an evaluation of the entire combinatorial space for freehand and grasping microgestures (393K layouts), and quantified the performance across different layout choices, revealing new gesture detection opportunities with IMUs. Finally, we demonstrate the versatility of our method with four scenarios.

We present a method and graphical tool for rapidly selecting sparse IMU layouts that achieve a good trade-off between minimal instrumentation and high recognition accuracy. The tool allows designers to specify high-level requirements (e.g., desired gestures and grasps), constraints (locations on the hand and fingers that should remain un-instrumented), and the total number of IMUs to be deployed. It then automatically selects an optimal sparse IMU layout matching the given preferences.
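The selection step described above can be illustrated with a minimal Python sketch. It is not the SparseIMU tool itself: the function `select_layout`, the location names `loc_0` to `loc_16`, and the toy scoring function are all hypothetical, and the real tool scores layouts by recognition accuracy on the collected dataset. The sketch only shows the general idea of searching layouts of a fixed size while skipping locations the designer wants to keep un-instrumented.

```python
from itertools import combinations
from typing import Callable, FrozenSet, Iterable, Optional, Tuple

Layout = FrozenSet[str]


def select_layout(
    locations: Iterable[str],
    num_imus: int,
    excluded: Iterable[str],
    score: Callable[[Layout], float],
) -> Optional[Tuple[Layout, float]]:
    """Exhaustively search layouts of exactly `num_imus` sensors.

    `score` is a hypothetical stand-in for a recognition-accuracy
    estimate; higher is better. Returns None if no layout is feasible.
    """
    # Drop locations that must remain un-instrumented.
    allowed = sorted(set(locations) - set(excluded))
    best: Optional[Tuple[Layout, float]] = None
    for combo in combinations(allowed, num_imus):
        layout = frozenset(combo)
        value = score(layout)
        if best is None or value > best[1]:
            best = (layout, value)
    return best


# Toy data: 17 hypothetical sensor locations with made-up weights.
LOCATIONS = [f"loc_{i}" for i in range(17)]
TOY_WEIGHTS = {name: float(i) for i, name in enumerate(LOCATIONS)}


def toy_score(layout: Layout) -> float:
    # Assumption for illustration only: a layout's score is the sum of
    # per-location weights. Real accuracy is not additive like this.
    return sum(TOY_WEIGHTS[name] for name in layout)


result = select_layout(LOCATIONS, num_imus=2, excluded={"loc_16"}, score=toy_score)
print(result)
```

With 17 candidate locations an exhaustive search stays tractable, since there are only 2^17 − 1 = 131,071 non-empty subsets in total.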

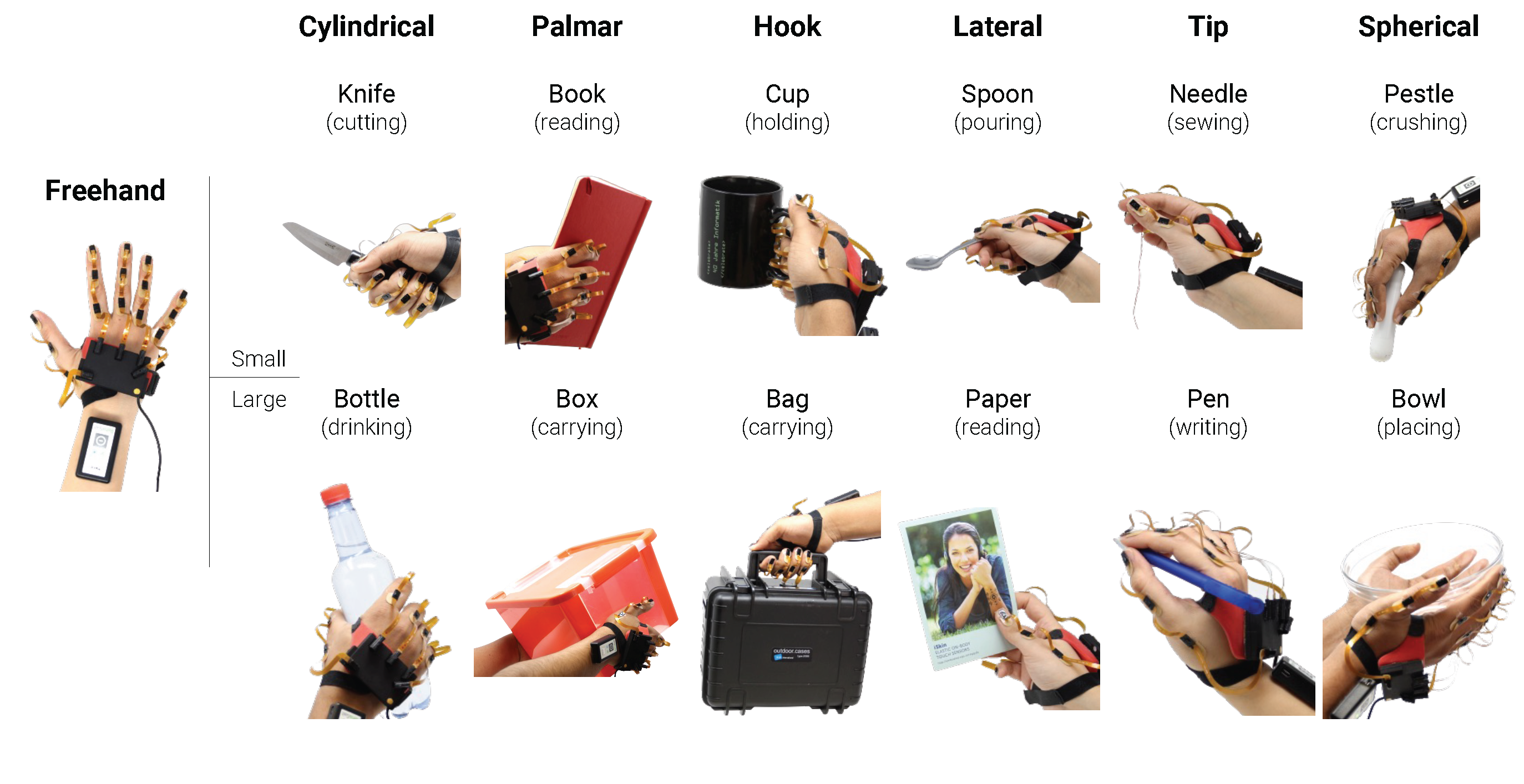

We collected the microgestures dataset using a dense network of 17 IMUs placed on the hand. It covers freehand gestures as well as gestures performed while grasping 12 objects: two variations for each of six grasp types.

The dataset includes six gestures performed with three fingers – Tap, Flexion, Extension, Abduction, Adduction and Circumduction. In addition, we captured three non-gesture classes: Static hold (just holding the object), Primary action performed while holding the object, and an Unscripted action where the user was free to perform any custom movement.
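As a rough illustration of the resulting label space, the sketch below enumerates the classes in Python. It assumes that every gesture is recorded with every one of the three fingers and that each finger-gesture pair counts as its own class; this page does not confirm either point. The finger names are hypothetical placeholders, since the page does not name the fingers.

```python
from itertools import product

GESTURES = ["Tap", "Flexion", "Extension", "Abduction", "Adduction", "Circumduction"]
# Hypothetical placeholders: the page does not say which three fingers are used.
FINGERS = ["finger_a", "finger_b", "finger_c"]
NON_GESTURES = ["Static hold", "Primary action", "Unscripted action"]


def build_labels() -> list[str]:
    """Build one label per finger-gesture pair, plus the non-gesture classes."""
    gesture_labels = [f"{finger}:{gesture}" for finger, gesture in product(FINGERS, GESTURES)]
    return gesture_labels + NON_GESTURES


labels = build_labels()
print(len(labels))  # 3 fingers x 6 gestures + 3 non-gesture classes = 21
```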

As mentioned in the article, the computational tool and the dataset of SparseIMU are available for download. Both may be used only for scientific and/or non-commercial purposes.